Ridi, Pagliaccio!

Neural style transfer for videos.

Some time ago, I wrote a post on neural style transfer. Among other things, I argued that it was the perfect domain where people from the “hard sciences” and people from the humanities and social sciences could work together.

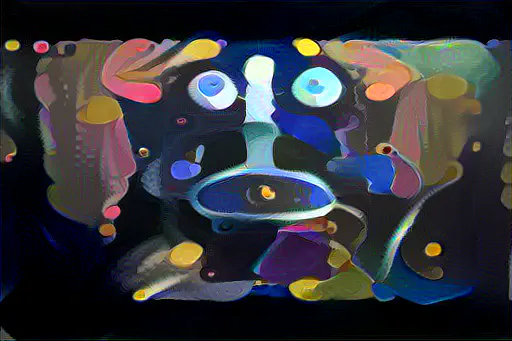

Today I’d like to illustrate this further by showing how I applied the technique to an existing video.

The original video shows the Ohbot, a robot head I programmed in Python, lip-syncing to a famous opera aria for tenor:

The transformed video cycles through 15 different styles, each lasting about 3 seconds. Every frame except those at the very beginning and end of the video was put through style transfer. Here’s the list, in no particular order:

- The Mona Lisa by Leonardo da Vinci

- Composition VI by Vassily Kandinsky

- The Weeping Woman by Pablo Picasso

- Portrait of Adele Bloch-Bauer I by Gustav Klimt

- Port en Normandie by Georges Braque

- La Couleur Tombée du Ciel by Marc Chagall

- Several Circles by Vassily Kandinsky

- (unknown title) by Victor Figol

- Tableau I by Piet Mondrian

- The Fox by Franz Marc

- La récolte des pommes à Éragny by Camille Pissarro

- Le Repas de Gargantua by Gustave Doré

- The Scream by Edvard Munch

- The Women of Algiers (Version O) by Pablo Picasso

- The Starry Night by Vincent van Gogh
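For the curious, here is a minimal sketch of how each frame could be matched to one of the 15 styles. It is not the exact code used for the video: the function name `style_for_frame`, the frame rate, and the lengths of the untouched intro and outro are all assumptions made for illustration. Only the 15 styles and the roughly 3 seconds per style come from the description above.

```python
from typing import Optional


def style_for_frame(
    frame_idx: int,
    fps: float,
    total_frames: int,
    n_styles: int = 15,              # from the post: 15 styles
    seconds_per_style: float = 3.0,  # from the post: about 3 sec. each
    intro_seconds: float = 2.0,      # assumption: unstyled opening
    outro_seconds: float = 2.0,      # assumption: unstyled ending
) -> Optional[int]:
    """Return the index of the style to apply to a frame, or None to leave it untouched."""
    t = frame_idx / fps              # timestamp of this frame in seconds
    duration = total_frames / fps    # length of the whole video in seconds

    # Frames in the intro or outro are kept as they are.
    if t < intro_seconds or t >= duration - outro_seconds:
        return None

    # Each style owns one consecutive time slot.
    slot = int((t - intro_seconds) // seconds_per_style)

    # Assumption: any leftover time is given to the last style.
    return min(slot, n_styles - 1)


if __name__ == "__main__":
    # Hypothetical 49-second clip at 25 fps: 2 s intro + 15 * 3 s + 2 s outro.
    for idx in (0, 50, 125, 1174, 1175):
        print(idx, style_for_frame(idx, fps=25, total_frames=1225))
```

Each frame that gets a style index would then be passed, together with the matching painting, to whichever style transfer model you prefer, and the results reassembled into a video.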

ENJOY!!!